Why AI Systems Need Continuity Without Creating Liability

An AI system can be useful even without memory, but an AI system with unmanaged memory can be dangerous.

As organizations use agentic AI for more than one-off tasks, they find that the moment their agents need context across sessions, memory stops being a nice-to-have feature and becomes an architectural responsibility. What agents remember, how long they remember it, where that memory lives, and who controls it determine whether AI systems learn effectively or drift silently into compliance violations.

Memory and state management (the patterns that govern how AI agents retain, access, update, and discard information over time) transform AI from stateless responders into systems capable of consistency and learning. However, memory introduces risk as quickly as it introduces value. Problems like context drift, data leakage, implicit learning, compliance violations, and debugging blind spots accumulate quietly until they cause issues in production.

If your AI system runs over time, memory isn’t optional. It’s either a planned part of your system or a hidden risk.

What Memory and State Management Actually Do

The memory and state management pattern answers critical questions about information persistence in agentic systems: What does the agent remember from past interactions? What context persists across workflow steps or sessions? What information is shared across multiple agents? What information must be forgotten to comply with privacy requirements? How is memory scoped, secured, and audited?

This pattern transforms AI from a disconnected tool into a system capable of continuity and informed decision-making, but only when memory is designed intentionally rather than allowed to emerge organically.

McKinsey’s October 2025 research on CEO strategies for agentic AI emphasizes that breakthroughs in model and system capabilities now include vastly larger and more precise short-term and long-term memory structures that improve both recall breadth and precision. These capabilities enable agents to personalize interactions with external customers and internal users, but they also create enterprise governance challenges that did not exist in stateless AI systems.

Why Memory Changes the Nature of AI Systems

Without memory, every interaction starts from scratch. The agent has no context, no history, and no ability to learn from previous decisions.

With memory, agents don’t repeat finished work. Context moves with them from task to task, decisions use past patterns, and the system feels connected instead of fragmented. AI can then act as part of ongoing processes, not just as a stand-alone tool.

This continuity is essential for enterprise workflows such as long-running processes spanning days or weeks, case management that requires historical context, ongoing investigations that accumulate evidence over time, customer or employee support that must maintain relationship context, and multi-stage delivery pipelines where context from earlier stages informs later decisions.

Memory enables AI to behave like a team member working toward objectives over time, not just a tool that responds to individual queries.

The Difference Between Memory and State

People often confuse the concepts of memory and state, but in agentic systems, they play very different roles.

Enterprise systems need to clearly manage both state and memory. Mixing them up causes confusion, errors, and risk. State management ensures workflow progress, while memory management ensures agents learn and improve over time without creating compliance exposure.
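One way to keep the two apart in code is to give them separate types with separate lifetimes. The sketch below is illustrative only: `WorkflowState`, `AgentMemory`, `Step`, and `run_case` are hypothetical names, and it assumes state tracks where a single workflow run stands while memory holds what the agent carries from one run to the next.

```python
from dataclasses import dataclass, field
from enum import Enum


class Step(Enum):
    INTAKE = "intake"
    REVIEW = "review"
    DONE = "done"


@dataclass
class WorkflowState:
    """State: where one workflow run currently stands. Discarded when the run ends."""
    case_id: str
    step: Step = Step.INTAKE

    def advance(self) -> None:
        # Move to the next step in definition order; stay put once DONE.
        order = list(Step)
        idx = order.index(self.step)
        if idx < len(order) - 1:
            self.step = order[idx + 1]


@dataclass
class AgentMemory:
    """Memory: what the agent carries across runs, such as outcomes of past work."""
    lessons: list = field(default_factory=list)

    def remember(self, lesson: str) -> None:
        self.lessons.append(lesson)


def run_case(case_id: str, memory: AgentMemory) -> WorkflowState:
    # A fresh state object per run; the memory object is passed in and outlives it.
    state = WorkflowState(case_id)
    while state.step is not Step.DONE:
        state.advance()
    memory.remember(f"{case_id}: completed")
    return state
```

Each call to `run_case` starts from a new `WorkflowState`, while the same `AgentMemory` instance accumulates across calls. Keeping the lifetimes separate makes it harder for workflow progress to leak into long-term memory by accident.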

Common Types of Memory in Agentic Systems

Each type of memory needs its own rules for how long to keep data, how to store it, and who can access it.

Why Retrieval-Augmented Generation Is Not Sufficient

People often think retrieval-augmented generation (RAG) is the same as memory, but it isn’t.

RAG retrieves relevant information on demand from external knowledge sources and grounds responses in authoritative data at query time. This improves accuracy and reduces hallucinations, but it does not provide continuity.

Memory preserves continuity across interactions, tracks progress through multi-step workflows, maintains context across sessions and agents, and records decisions and their outcomes for future reference.

RAG is just one part of memory management, not a substitute for it. Enterprises that rely solely on RAG struggle with continuity and traceability. Agents can’t learn from experience, context disappears between sessions, and debugging becomes nearly impossible because there is no record of why decisions were made.
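The contrast can be sketched in a few lines. This is a simplified illustration, not a real RAG pipeline: `KNOWLEDGE_BASE` holds made-up sample text, `retrieve` uses naive keyword matching in place of vector search, and `DecisionLog` is a hypothetical stand-in for whatever store records decisions and their rationale.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Made-up sample content, for illustration only.
KNOWLEDGE_BASE = {
    "refund policy": "Refunds are allowed within 30 days.",
    "escalation": "Escalate disputes over $500 to a human reviewer.",
}


def retrieve(query: str) -> list:
    """RAG-style lookup: finds documents at query time and keeps nothing afterwards."""
    q = query.lower()
    return [text for topic, text in KNOWLEDGE_BASE.items() if topic in q]


@dataclass
class DecisionRecord:
    session_id: str
    decision: str
    rationale: str
    at: datetime


@dataclass
class DecisionLog:
    """Memory-style record: what was decided, why, and in which session."""
    records: list = field(default_factory=list)

    def record(self, session_id: str, decision: str, rationale: str) -> None:
        self.records.append(
            DecisionRecord(session_id, decision, rationale, datetime.now(timezone.utc))
        )

    def history(self, session_id: str) -> list:
        return [r for r in self.records if r.session_id == session_id]
```

Calling `retrieve` twice with the same query returns the same grounding text and leaves no trace. Only the `DecisionLog` can answer, after the fact, why an agent chose a particular action in a given session.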

McKinsey’s June 2025 research on the agentic AI advantage notes that despite their power, first-generation large language models have been fundamentally reactive and isolated from enterprise systems, largely unable to retain memory of past interactions. This limitation constrained their deployment at enterprise scale. Agentic AI addresses this limitation through structured memory management, but only when memory is treated as a governed system component.

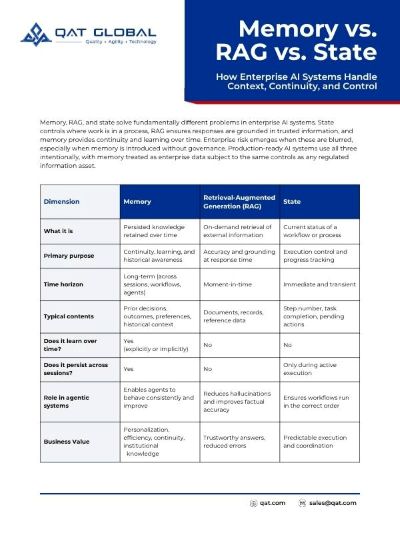

Bonus Resource: Memory vs. RAG vs. State

Understand the differences between memory, retrieval-augmented generation (RAG), and state—and when to use each. This download breaks it down in a practical, easy-to-apply way.

The Hidden Risks of Unmanaged Memory

Memory introduces enterprise risk that rarely appears immediately. These risks accumulate quietly in production environments.

Why Memory Must Be Designed, Not Discovered

Many teams allow memory to emerge organically through accumulated prompt history, sprawling conversation logs, or vector stores deployed without governance. This approach does not scale and creates hidden liability.

Enterprise memory must be intentional in what gets remembered and what gets forgotten, scoped to appropriate boundaries (user, tenant, function), observable so teams can inspect and validate memory contents, governed by clear retention and deletion policies, and auditable with full traceability of memory updates and access.

If memory exists in your agentic system, it must be treated as part of the system architecture, not a side effect of implementation choices. Memory is enterprise data, and it requires the same governance, security, and compliance controls as any other enterprise data asset.
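As a rough illustration of those properties, the sketch below shows a small in-memory store that is scoped, time-limited, inspectable, and audited. Everything here is an assumption for illustration: `GovernedMemory`, its method names, and the single store-wide retention period are hypothetical, and a production system would sit on a real datastore with per-category policies.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class MemoryItem:
    value: str
    expires_at: datetime


@dataclass
class GovernedMemory:
    retention: timedelta
    items: dict = field(default_factory=dict)      # (scope, key) -> MemoryItem
    audit_log: list = field(default_factory=list)  # (timestamp, action, scope, key)

    def _audit(self, now: datetime, action: str, scope: str, key: str) -> None:
        self.audit_log.append((now, action, scope, key))

    def write(self, scope: str, key: str, value: str,
              now: Optional[datetime] = None) -> None:
        now = now or datetime.now(timezone.utc)
        self.items[(scope, key)] = MemoryItem(value, now + self.retention)
        self._audit(now, "write", scope, key)

    def read(self, scope: str, key: str,
             now: Optional[datetime] = None) -> Optional[str]:
        now = now or datetime.now(timezone.utc)
        self._audit(now, "read", scope, key)
        item = self.items.get((scope, key))
        if item is None:
            return None
        if item.expires_at <= now:
            # Retention period has passed: delete on access and record it.
            del self.items[(scope, key)]
            self._audit(now, "expire", scope, key)
            return None
        return item.value

    def forget(self, scope: str, key: str,
               now: Optional[datetime] = None) -> bool:
        """Explicit deletion, for example in response to a privacy request."""
        now = now or datetime.now(timezone.utc)
        removed = self.items.pop((scope, key), None) is not None
        self._audit(now, "forget", scope, key)
        return removed

    def inspect(self, scope: str, now: Optional[datetime] = None) -> dict:
        """Return the unexpired contents of one scope so teams can review them."""
        now = now or datetime.now(timezone.utc)
        self._audit(now, "inspect", scope, "*")
        return {k: item.value for (s, k), item in self.items.items()
                if s == scope and item.expires_at > now}
```

Because every entry is keyed by `(scope, key)`, a read under one tenant's scope cannot return another tenant's data. Expiry here happens lazily on read; a real deployment would likely also run a scheduled purge so expired data does not linger unread.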

Forrester’s August 2025 research on data governance solutions emphasizes that governance has evolved from a compliance-focused discipline into the control plane for trust, agility, and AI at enterprise scale. The evaluation of leading providers shows a clear market shift toward agentic AI governance systems that actively automate policy enforcement and remediation while keeping humans in the loop. Memory management is a core component of this governance transformation.

How Memory and State Fit into Production-Ready Agentic Systems

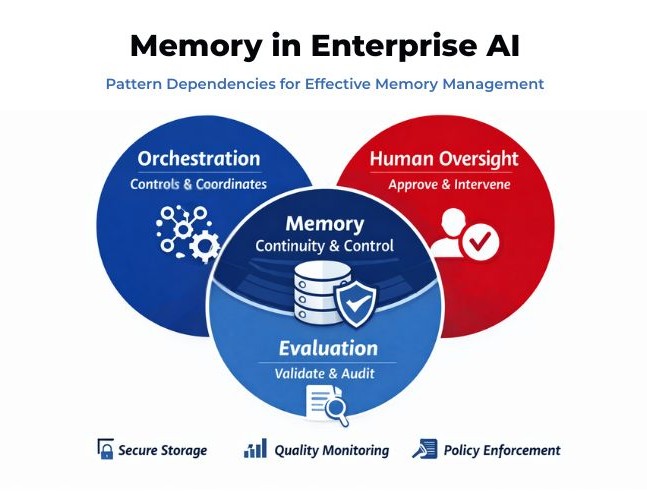

In mature enterprise architectures, memory and state management are tightly integrated with five other capabilities: orchestration, which controls access to memory and enforces boundaries; evaluation, which validates memory use against quality and compliance standards; human-in-the-loop controls, which govern sensitive memory retention and deletion decisions; security and compliance frameworks, which enforce data protection and privacy requirements; and event-driven logic, which updates state predictably in response to workflow transitions.

Memory is not a free-form knowledge pool that agents access without restriction. It is a managed system component with explicit policies, access controls, and oversight mechanisms.
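A minimal sketch of that idea is a gateway that checks an explicit policy before any agent touches memory. The names `MemoryGateway` and `MemoryAccessDenied`, the agent names, and the policy shape (agent name mapped to allowed scope and operation pairs) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


class MemoryAccessDenied(Exception):
    """Raised when an agent attempts an operation its policy does not allow."""


@dataclass
class MemoryGateway:
    # policy: agent name -> set of (scope, operation) pairs the agent may perform
    policy: dict
    store: dict = field(default_factory=dict)   # (scope, key) -> value
    denied: list = field(default_factory=list)  # (agent, scope, operation)

    def _check(self, agent: str, scope: str, op: str) -> None:
        if (scope, op) not in self.policy.get(agent, set()):
            # Keep a record of refused attempts for later review.
            self.denied.append((agent, scope, op))
            raise MemoryAccessDenied(f"{agent} may not {op} scope {scope!r}")

    def write(self, agent: str, scope: str, key: str, value: str) -> None:
        self._check(agent, scope, "write")
        self.store[(scope, key)] = value

    def read(self, agent: str, scope: str, key: str) -> Optional[str]:
        self._check(agent, scope, "read")
        return self.store.get((scope, key))


gateway = MemoryGateway(policy={
    "triage-agent": {("case-notes", "read"), ("case-notes", "write")},
    "billing-agent": {("invoices", "read")},
})
gateway.write("triage-agent", "case-notes", "case-42", "customer reported outage")
```

With this policy, `gateway.read("billing-agent", "case-notes", "case-42")` raises `MemoryAccessDenied` and the attempt is appended to `gateway.denied`. The point of the design is that agents never hold a direct reference to the store; the orchestration layer owns the gateway and the policy.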

When Memory Is Essential and When It Should Be Limited

Memory is essential when work spans multiple steps or sessions where context accumulation improves performance, historical context materially affects current decisions, agents must collaborate over time and share accumulated knowledge, or auditability requires maintaining records of decisions and their rationale.

Memory should be limited or avoided when:

- Tasks are one-off and low-risk, with no future context value.

- Data sensitivity is high, and reuse creates compliance exposure.

- Determinism is required, and historical context introduces unpredictability.

- Regulatory constraints demand minimal retention with aggressive deletion policies.

The most dangerous systems are those whose memory goes unacknowledged. Implicit memory creates all the risks of managed memory without any of the controls.
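The criteria above can be turned into an explicit, reviewable decision rather than a default. The following is a sketch under stated assumptions: `TaskProfile`, `MemoryMode`, and `choose_memory_mode` are hypothetical names, and the precedence order (limiting factors win over reasons to remember) is one possible policy, not a rule from this article.

```python
from dataclasses import dataclass
from enum import Enum


class MemoryMode(Enum):
    NONE = "none"              # keep nothing after the task ends
    MINIMAL = "minimal"        # keep only what is required, delete aggressively
    PERSISTENT = "persistent"  # retain under the normal retention policy


@dataclass(frozen=True)
class TaskProfile:
    # Reasons memory may be essential
    multi_session: bool = False
    history_affects_decisions: bool = False
    shared_across_agents: bool = False
    audit_trail_required: bool = False
    # Reasons memory should be limited or avoided
    sensitive_data: bool = False
    must_be_deterministic: bool = False
    minimal_retention_mandated: bool = False


def choose_memory_mode(task: TaskProfile) -> MemoryMode:
    needs_memory = any([
        task.multi_session,
        task.history_affects_decisions,
        task.shared_across_agents,
        task.audit_trail_required,
    ])
    # Assumed precedence: determinism rules out memory entirely.
    if task.must_be_deterministic or not needs_memory:
        return MemoryMode.NONE
    # Assumed precedence: sensitivity or regulation caps memory at MINIMAL.
    if task.sensitive_data or task.minimal_retention_mandated:
        return MemoryMode.MINIMAL
    return MemoryMode.PERSISTENT
```

A one-off task with no flags set resolves to `MemoryMode.NONE`, which makes "no memory" a recorded choice instead of an accident. Teams with different risk tolerances would reorder or extend these checks.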

What QAT Global Has Learned About Memory and State

AI systems should remember what helps the business and forget what puts it at risk. Memory is powerful only when it is governed. Intelligence without continuity limits value, but memory without control leads to risk.

Too many organizations discover memory management failures in production: during audits when they cannot demonstrate compliance with data retention policies, after privacy incidents when sensitive information persisted beyond its authorized lifetime, or when debugging critical failures with no historical context to reconstruct agent reasoning. The organizations that succeed with memory-enabled agentic AI in 2026 are those that recognized early that memory is not a feature you add to agents; it is a system capability you design, govern, and audit from the start.

McKinsey’s November 2025 State of AI report found that 62% of organizations are at least experimenting with AI agents, but most remain in pilot mode because they cannot manage the complexity that memory and state introduce. The gap between pilot success and production readiness is often a memory governance gap: systems work well in controlled experiments but fail when memory accumulates, context drifts, or compliance requirements surface.

As discussed in our overview of essential agentic workflow patterns, enterprise-ready AI is not built by perfecting individual patterns in isolation. Memory and state management become fully effective only when integrated with orchestration (which controls what agents can remember), evaluation (which validates that memory improves rather than degrades performance), and human oversight (which intervenes when memory-based decisions exceed confidence thresholds).

Bonus Material: Memory Risk Signals Checklist

Want a quick way to spot hidden risks in your system? Download this practical checklist to identify early warning signs and strengthen reliability before issues escalate.

What Comes Next in the Series

With orchestration establishing authority and memory providing continuity, enterprise AI systems gain the foundational capabilities for long-term operation. The next challenge is accountability: deciding when AI should act independently, when humans must intervene, and how confidence, risk, and escalation are designed into agentic workflows.

In the next article, we explore the Human-in-the-Loop Control Pattern: how enterprises determine the boundary between autonomous action and human judgment, why that boundary must be explicit rather than implicit, and how to design escalation mechanisms that preserve both efficiency and accountability.

Memory gives AI continuity. Human oversight gives it legitimacy.

Ready to Use AI Without the Guesswork?

You want to move faster, deliver better software, and keep control over quality, security, and outcomes. But with so much AI hype, it’s hard to know what actually works, what’s risky, and where to start.

That’s where QAT Global comes in.

For nearly 30 years, we’ve helped organizations navigate major shifts in software development. Today, we guide teams in applying AI where it delivers real value, accelerating delivery, reducing friction, and improving ROI, without sacrificing governance or trust.

If you’re exploring AI and want a clear, practical path forward, let’s talk.

Schedule a conversation with QAT Global and take the next step with confidence.
