The Ceiling Most AI Programs Hit

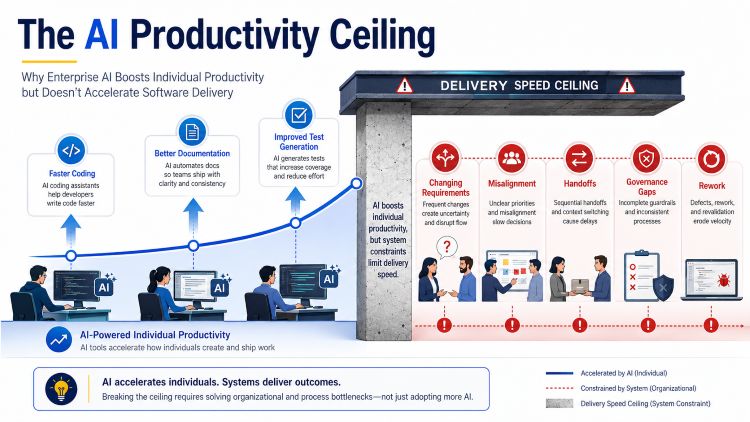

When you approve the AI budget, no one mentions this: the productivity gains are real, but they aren’t enough. At companies that have used AI coding assistants and generative tools over the past two years, developers write code faster, documentation cycles are shorter, and test generation has improved. The pilot programs delivered what leaders expected, and the ROI stories made sense in the boardroom.

Yet most technology leaders discovered, a year or so in, that none of this had sped up delivery. Release cycles stayed about the same. Time-to-market barely improved. Roadmaps still slipped. According to McKinsey’s December 2025 “State of AI” report, 78% of organizations had added generative AI to at least one area, but fewer than 20% saw real improvement in overall delivery speed. The tools work, but the system around them does not.

This is the ceiling most enterprise AI programs hit, and understanding it requires letting go of the comforting assumption that making individuals faster will automatically speed up the whole organization. That assumption has cost companies a lot.

The Real Constraint Was Never Coding Speed

In mid-sized and large companies, software delivery doesn’t slow down because engineers code too slowly. The real issues are late or changing requirements, misalignment between business and engineering, documentation that falls behind, and unclear handoffs. According to Gartner’s 2025 AI Adoption Maturity Survey, 67% of enterprise IT leaders said process fragmentation and handoff failures were their main delivery problems, not developer speed.

If AI is only used for coding, all those problems stay the same. Engineers write code faster, but the system wasn’t changed to handle it. Faster work at one step doesn’t speed up the whole process if the rest of the workflow is slow. The bottleneck just moves further along, or it becomes more obvious.

This is a structural problem that most AI programs don’t address. The tools focus on helping individuals, but the real delivery challenge is at the organizational level.

Three Levels of AI Maturity and Why Most Organizations Are Stuck at One

Forrester’s October 2025 “AI Integration Maturity” framework describes three distinct levels of enterprise AI adoption that have become a useful diagnostic for organizations trying to understand where their programs actually stand.

At the first level, called “AI-Assisted” by Forrester, AI helps individuals with tasks like code suggestions, debugging, test generation, and drafting documentation. This is how most companies use AI today, and it does provide real value. Developers save time, and organizations see some productivity gains. But the main limitation is structural. AI at this stage only works on isolated tasks and doesn’t see the bigger delivery picture. The workflow stays fragmented, and the real delivery problems remain.

At the second level, called “AI-Driven,” AI moves from helping with individual tasks to running coordinated workflows throughout the delivery process. Requirements are structured and turned into managed artifacts. Architecture decisions are checked in context. Code is generated from clear inputs. Testing, documentation, and validation are built into the workflow rather than added at the end. Companies at this level see what maturity frameworks call “system-level gains”: less rework, faster feature cycles, and better delivery predictability. According to McKinsey’s November 2025 analysis of software delivery transformation, organizations using AI at the workflow level saw up to 40% shorter feature cycle times than those using AI only for tasks.

The third level, called “AI-Native” by both Gartner and Forrester, puts AI directly into products and systems. This includes autonomous decision-making, continuous learning in production, and AI-driven customer experiences. Reaching this level requires specialized governance and a mature organization. As of March 2026, it isn’t yet the main goal for most companies. The real opportunity is moving from Level 1 to Level 2.

So, if Level 2 brings the real benefits, why are most organizations still stuck at Level 1?

The answer is that moving from AI-Assisted to AI-Driven requires something most organizations have been unwilling to do: redesign how delivery work is structured. Level 1 adoption required no change to existing workflows. A developer added a copilot and kept working the same way. Level 2 requires examining the handoffs, the requirements process, the governance model, and the accountability structure, and then rebuilding them around AI execution rather than AI assistance. That is a different kind of investment, and most organizations have not made it yet.

This is also why companies investing in the same tools are seeing very different outcomes. Some report marginal productivity gains that plateau after six months. Others are compressing delivery timelines and increasing throughput without adding headcount. The difference is not the tools. It is how those tools are integrated into the delivery system. One group optimized for adoption. The other redesigned for execution.

Why Enterprise AI Programs Stall and Stay There

The truth is that most companies never planned for AI to work at the system level. They aimed to make individual work easier, and that worked. But moving to coordinated workflows means rethinking how delivery is structured, and most organizations have avoided that.

AI came into companies in the simplest way possible. Developer copilots, chat tools, and standalone productivity apps were easy to buy, test, and explain. They gave quick, clear value without changing how teams worked together. Existing workflows and handoffs stayed the same. AI was just added on top of old processes that weren’t updated for it.

Governance concerns reinforced this pattern. IT leaders were right to worry about data privacy, compliance, and quality, but that caution hardened into a structural barrier. Most companies set rules for which AI tools could be used but never built a framework for using AI across their workflows, so AI use stayed confined to individual tasks.

The vendor market made things harder. Most AI tools for software development compete on features: better code completion, faster generation, and smarter models. Few address the bigger issue of how AI should work across the whole software delivery process, which is where the real problems are. Companies bought the tools that were available, not the ones that addressed their actual needs.

The Architecture of a System That Actually Accelerates Delivery

To break through this limit, you need a different kind of architectural thinking: one focused on designing the system so that AI can actually work as intended.

The highest-leverage use of AI in software delivery is clearing up ambiguity before coding starts. Requirements written as vague paragraphs create misalignment between business goals and engineering work, forcing developers to guess rather than build, and those guesses surface later as rework. Every hour of unclear requirements costs far more time downstream. That is why applying AI early in the process has a bigger impact than applying it to coding alone.

Top delivery teams know that speed comes from good structure, not individual effort. The same goes for AI. If AI just responds to individual prompts, its results depend on the quality of those prompts. However, when AI operates within a well-designed, coordinated workflow with clear roles and handoffs, its output improves as the design of the system evolves.

This is where multi-agent architecture matters. Instead of using one general model for everything, leading companies use specialized agents for specific tasks: one handles business requirements, another sets architectural rules, another writes code, and another tests against defined criteria. Each agent works within clear boundaries and rules. Handoffs between agents are explicit and traceable. And most importantly, humans stay in the loop throughout the entire process.

In practice, this means requirements aren’t just paragraphs in a ticket anymore. They become structured items, created and checked by AI agents and humans before any code is written. Acceptance criteria are set, ambiguity is found and fixed, and the scope is clear. When development starts, the input is well-defined, not open to interpretation. This change alone removes a type of rework that most teams used to think was unavoidable.
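As a rough illustration of a requirement held as a structured item rather than a ticket paragraph, the sketch below models it as a Python dataclass with a check that blocks development until acceptance criteria and scope exist and no criterion uses vague wording. The `Requirement` class, its fields, and the `VAGUE_TERMS` list are assumptions made for illustration; in the workflow described here, AI agents and human reviewers would do this checking far more thoroughly than a keyword list can.

```python
import re
from dataclasses import dataclass, field

# Illustrative list of terms that often signal untestable intent. A real team
# would tune this, or have a reviewer or agent flag ambiguity instead.
VAGUE_TERMS = {"fast", "easy", "user-friendly", "intuitive", "robust", "as needed", "etc"}


@dataclass
class Requirement:
    """A requirement held as a structured artifact instead of a ticket paragraph."""
    title: str
    acceptance_criteria: list[str] = field(default_factory=list)
    in_scope: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)

    def issues(self) -> list[str]:
        """List the problems that should block development from starting."""
        problems = []
        if not self.acceptance_criteria:
            problems.append("no acceptance criteria")
        if not self.in_scope:
            problems.append("scope not defined")
        for criterion in self.acceptance_criteria:
            text = criterion.lower()
            found = sorted(
                term for term in VAGUE_TERMS
                if re.search(rf"\b{re.escape(term)}\b", text)
            )
            if found:
                problems.append(f"ambiguous criterion {criterion!r}: {', '.join(found)}")
        return problems

    def ready_for_development(self) -> bool:
        return not self.issues()


draft = Requirement("Report export", acceptance_criteria=["Export should be fast and easy"])
print(draft.issues())

refined = Requirement(
    "Report export",
    acceptance_criteria=["Monthly report downloads as CSV within 5 seconds for 10,000 rows"],
    in_scope=["CSV format"],
    out_of_scope=["PDF export"],
)
print(refined.ready_for_development())
```

The draft fails because its scope is missing and its only criterion cannot be tested; the refined version passes because each criterion names a measurable outcome.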

In high-performing teams, this changes the first 48 hours of a feature. Instead of engineers spending days discussing intent in Slack or backlog comments, AI-generated requirements, acceptance criteria, and architectural rules are defined and verified before coding begins. What used to take several rounds of clarification now happens once, up front, with structure. Engineers stop guessing and start building. The conversation moves from “what did you mean by this?” to “here’s what we’re delivering and why.”

As a result, requirements that used to take weeks to sort out can be structured and checked in hours. Feature cycle times get shorter because ambiguity is resolved early, not during coding. Most enterprise teams treat rework as normal, but it isn’t. Rework happens when confusion enters the process early and grows at each stage. Combining AI with human governance at the requirements stage removes that confusion before it spreads, cutting out whole categories of work that would have taken weeks. According to a January 2026 Gartner research note on AI delivery orchestration, companies testing multi-agent software delivery frameworks saw 35% to 50% shorter requirements-to-deployment times in early production.

This isn’t just theory. It’s what happens when AI is used to solve the real problems in the delivery system, not just the obvious ones.

What Enterprise Leaders Get Wrong About AI ROI

One of the biggest mistakes in enterprise AI investment is confusing productivity metrics with delivery metrics. They aren’t the same, and mixing them up has led companies to declare success when they should have been looking for problems.

Most companies have already captured the easy wins. The 20% to 30% boost in individual productivity from AI coding assistants has largely been realized by early adopters. The remaining value, roughly 70%, comes from how work moves through the system: how requirements are set, how handoffs are managed, and how confusion is removed before it causes rework. That’s where companies pull ahead, and it requires a different kind of investment than buying better tools.

Improvements in individual productivity are real and valuable. They lower the cost per task, make developers’ jobs better, and free up time for more important work. But they don’t measure how well the delivery system works. If every developer is 30% faster but it still takes three weeks to move from requirements to architecture, the company hasn’t really improved its delivery speed.
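The arithmetic behind this is simple enough to sketch. The Python below assumes a hypothetical feature whose stages run one after another, with made-up durations in days (the 21 days stands in for the “three weeks” above), and interprets “30% faster” as coding taking 30% less time. None of these numbers come from the studies cited in this article.

```python
# Hypothetical stage durations for one feature, in calendar days.
# Illustrative assumptions only, not measurements from the article.
STAGES = {
    "requirements_to_architecture": 21.0,  # the "three weeks" from the example
    "coding": 10.0,
    "review_and_testing": 7.0,
    "release": 3.0,
}


def cycle_time(durations: dict[str, float]) -> float:
    """End-to-end cycle time when stages run one after another."""
    return sum(durations.values())


def shorten_stage(durations: dict[str, float], stage: str, reduction: float) -> dict[str, float]:
    """Return a copy with one stage cut by `reduction` (0.30 means 30% shorter)."""
    updated = dict(durations)
    updated[stage] = durations[stage] * (1.0 - reduction)
    return updated


before = cycle_time(STAGES)
after = cycle_time(shorten_stage(STAGES, "coding", 0.30))
print(f"before: {before:.1f} days, after: {after:.1f} days")
print(f"end-to-end improvement: {(before - after) / before:.1%}")
```

With these assumed numbers, a 30% cut in coding time shortens the whole cycle from 41 to 38 days, about 7%. Applying the same 30% cut to the requirements-to-architecture stage instead saves 6.3 days, about 15% of the cycle, because the gain depends on where the time actually goes.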

The real ROI from AI at the system level comes from shortening the whole process, not just speeding up individual steps. Forrester’s Q4 2025 analysis of enterprise AI investment patterns found that companies measuring AI ROI at the workflow level, instead of just the task level, saw three to four times more business impact for their investment than those measuring only individual productivity. How you measure results shapes your investment strategy, which, in turn, shapes your outcomes.

Organizations that have moved past this ceiling track the right metrics: total feature cycle time, time from requirements to deployment, rework rates, and how predictable releases are. These numbers tell a different story than just counting lines of code per developer each day.
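These delivery metrics can usually be computed from data teams already have. The sketch below assumes hypothetical record types (`FeatureRecord`, `ReleaseRecord`) exported from an issue tracker and a deploy log, uses made-up dates, and picks one possible definition of release predictability: the share of releases shipped by their planned date. Organizations define these metrics differently, so treat the definitions as a starting point rather than a standard.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class FeatureRecord:
    """Hypothetical per-feature record joined from a tracker and a deploy log."""
    requirements_start: date
    deployed: date
    reworked: bool  # True if any delivered work had to be redone


@dataclass
class ReleaseRecord:
    planned: date
    actual: date


def median_requirements_to_deployment(features: list[FeatureRecord]) -> float:
    """Median calendar days from requirements start to deployment."""
    return median((f.deployed - f.requirements_start).days for f in features)


def rework_rate(features: list[FeatureRecord]) -> float:
    """Share of features that needed rework."""
    return sum(f.reworked for f in features) / len(features)


def on_time_release_rate(releases: list[ReleaseRecord]) -> float:
    """One possible predictability measure: share of releases shipped by the planned date."""
    return sum(r.actual <= r.planned for r in releases) / len(releases)


features = [
    FeatureRecord(date(2026, 1, 5), date(2026, 2, 2), reworked=True),
    FeatureRecord(date(2026, 1, 12), date(2026, 2, 6), reworked=False),
    FeatureRecord(date(2026, 1, 19), date(2026, 2, 27), reworked=True),
]
releases = [
    ReleaseRecord(planned=date(2026, 2, 1), actual=date(2026, 2, 1)),
    ReleaseRecord(planned=date(2026, 3, 1), actual=date(2026, 3, 8)),
]

print(f"median requirements-to-deployment: {median_requirements_to_deployment(features)} days")
print(f"rework rate: {rework_rate(features):.0%}")
print(f"on-time releases: {on_time_release_rate(releases):.0%}")
```

Tracked over time, these numbers show whether AI investment is shortening the whole process, which output-per-developer counts cannot reveal.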

The QAT Global Perspective

We build and run AI-integrated delivery systems that put AI directly into requirements, architecture, development, and validation workflows. This isn’t an extra layer on your current process, but a full redesign of how work moves through your system, with AI working within clear structures rather than just reacting to one-off prompts. In our experience, the pattern is clear. AI investments are real, tool adoption is real, and individual productivity gains are real, but so is the gap between those gains and actual delivery improvement. That gap is structural, not just a one-off issue, and our approach is built to close it.

The competitive advantage now lies in how well delivery systems are designed to execute with AI. Organizations doing this well are pulling away from firms stuck at the individual productivity layer, and the gap widens as workflow-level AI matures. The firms that recognize this shift and act on it now will be significantly harder to catch in twelve months.

Organizations that have moved past this ceiling have one thing in common: they stopped focusing on making developers faster and started looking for where their delivery system breaks down. They mapped out handoffs, found where confusion builds up, and identified where rework happens. Then they built AI integration around those problem areas, not just the easiest or most obvious places.

This is tougher than just adding a copilot tool. It takes someone willing to look honestly at the delivery setup, find the real constraints, and build a governance model so AI can work across workflows without adding new risks. The organizations that have done it are reporting real competitive separation, and the gap between them and organizations still at Level 1 is widening.

How to Diagnose Where You Are Today

Before you invest more in AI, take thirty minutes to answer these questions honestly:

- Are your requirements structured artifacts with defined acceptance criteria, or are they interpreted paragraphs that engineers have to decode?

- Does AI operate across your delivery workflows, or only within individual tools that don’t share context?

- Are outputs from each stage validated before the next stage begins, or is validation performed after development?

- Are you measuring end-to-end cycle time and release predictability, or individual developer productivity?

- When rework occurs, does it trace back to a coding error or to ambiguity introduced upstream?

If you chose the second option for most of these, you’re still at Level 1. The ceiling you’re hitting is a system design problem, and it can be fixed.

What Comes Next

The next article in this series will look at the governance structures that set AI-driven organizations apart from AI-assisted ones. It will cover how top companies build requirements frameworks, validation checkpoints, and accountability systems so multi-agent setups work reliably in real delivery environments. Examining what goes wrong when AI runs without governance is the quickest way to understand what good governance should do.

Work With QAT Global

If your company has invested in AI but hasn’t seen faster delivery, it’s time to look at your system’s architecture before adding more tools. QAT Global helps enterprise tech teams design AI-integrated delivery systems that work at the workflow level, not just the task level. Our AI-powered software development and custom engineering services follow the same principles discussed here: structured workflows, clear inputs, and accountability at every stage.

We’ve helped organizations in industries like healthcare, finance, manufacturing, and technology move from AI-assisted to AI-driven delivery. We know what sets successful programs apart from those that stall. If you’re ready to talk, visit qat.com/artificial-intelligence-ai or check out our Diamond AI Solutions framework at qat.ai/diamond-ai.

The gap between organizations operating at the tool layer and those operating at the workflow layer is widening quickly. As multi-agent delivery models mature, that gap will not close on its own. It will compound.

The question now is whether AI is working at the level your business truly needs.
