The ReAct Pattern: Why Adaptability Makes AI Agents Powerful (And Why Enterprises Must Contain It)

The ReAct pattern is often the point at which AI agents begin to demonstrate adaptive autonomy, materially increasing both operational capability and the risk that executive stakeholders must account for.

By alternating between reasoning and action, ReAct agents can explore uncertainty, gather missing information, adapt their approach mid-task, and make progress without a predefined script. This makes them far more capable than static, prompt-driven systems that follow rigid paths. It also makes them significantly harder to control.

Here’s what organizations discover once ReAct agents move from pilot to production: the adaptability that makes them valuable in uncertain environments is the same adaptability that makes them unpredictable in governed ones. Unpredictability in enterprise systems is unmanaged risk, not innovation.

What ReAct Actually Does

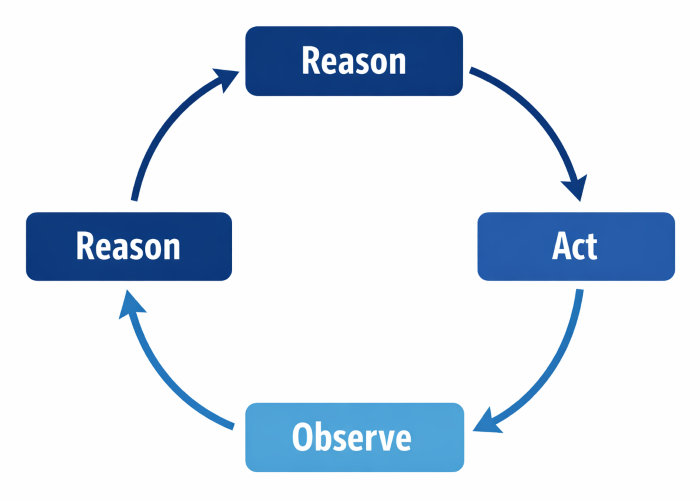

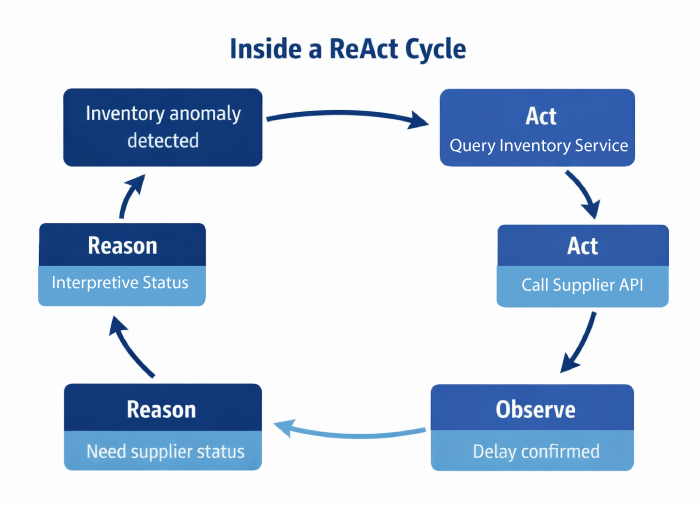

ReAct stands for Reason and Act. In this pattern, an AI agent alternates between reasoning about the current situation, taking an action, observing the result, and reasoning again about what to do next. Instead of planning everything up front or blindly executing predetermined steps, the agent continuously adapts based on what it learns through interaction.
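The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `react_loop`, `toy_reason`, and `toy_tools` are hypothetical names, and the reasoning function is a hard-coded stand-in for what would normally be a model call.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Step:
    thought: str
    action: str
    argument: str
    observation: str

def react_loop(reason: Callable, tools: dict, goal: str, max_steps: int = 5):
    """Alternate reason -> act -> observe until the reasoner chooses 'finish'."""
    history: list[Step] = []
    for _ in range(max_steps):
        # Reason: decide the next action from the goal and everything seen so far.
        thought, action, argument = reason(goal, history)
        if action == "finish":
            return argument, history
        # Act, then observe: run the chosen tool and record what came back.
        observation = tools[action](argument)
        history.append(Step(thought, action, argument, observation))
    return None, history  # step limit reached without an answer

# Toy stand-ins (assumptions) so the sketch runs without a model.
def toy_reason(goal, history):
    if not history:
        return ("I need the order status first.", "lookup", "order-17")
    return ("The lookup answered the question.", "finish", history[-1].observation)

toy_tools = {"lookup": lambda order_id: f"{order_id} is shipped"}

answer, trace = react_loop(toy_reason, toy_tools, "Where is order-17?")
```

Even in this toy form, the next action is chosen only after the previous observation is known, which is the property that separates ReAct from planning everything up front.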

This mirrors how humans solve complex problems. We assess what we know, try something, see what happens, and adjust. ReAct brings that same iterative loop into AI workflows.

For many teams, ReAct is the first pattern that makes AI feel truly agentic rather than merely responsive.

Why ReAct Delivers Results (When Conditions Allow)

ReAct excels in environments where information is incomplete, conditions change during execution, the correct path isn’t obvious from the start, and discovery is required to move forward.

By reasoning between actions, the agent selects tools based on context and evaluates outcomes in real time. If a query fails, it can refine the parameters, shift strategies, stop unproductive paths, and adapt to unexpected results.

Gartner’s August 2025 Hype Cycle report defines AI agents as autonomous or semi-autonomous software entities. These agents use AI techniques to perceive their environment, make decisions, take actions, and achieve goals in digital or physical settings. ReAct-style reasoning is central to their ability to adapt in real time.

This makes ReAct especially effective for research where the information landscape must be explored. It is also valuable in investigations where root causes aren’t immediately obvious, troubleshooting where standard playbooks fail, and exploratory workflows where the optimal path emerges through experimentation.

For many teams, ReAct represents their first encounter with AI that doesn’t just follow instructions but actually figures things out.

Where ReAct Is Operating in Enterprise Systems Today

Agents explore unfamiliar problem domains by searching available sources, summarizing findings, validating information against multiple references, and refining understanding step by step.

Agents assist humans by evaluating evolving inputs, considering multiple factors in real-time, and recommending next actions rather than delivering static answers disconnected from context.

Gartner predicts that by 2028, at least 15% of day-to-day work decisions will be made autonomously through agentic AI, up from 0% in 2024. ReAct-style reasoning can enable much of this autonomous decision-making capability.

In all of these cases, adaptability is the primary value ReAct delivers. That same adaptability becomes the primary governance challenge.

The Hidden Cost Nobody Mentions

ReAct’s adaptive reasoning is powerful, but it introduces risk in ways that don’t become apparent until you scale. Because the agent selects actions based on intermediate reasoning steps, outcomes are harder to predict, reproduce, and tightly constrain. Post-hoc explainability can also become challenging in environments where accountability matters. Common enterprise concerns that surface in production include:

- Non-deterministic behavior across runs where the same input produces different action sequences.

- Difficulty explaining why a particular action was taken when auditors or compliance teams ask.

- Overuse of tools while exploring alternatives, driving up costs without proportional value.

- Infinite or inefficient reasoning loops that consume resources without making meaningful progress.

- Actions taken based on flawed intermediate assumptions that aren’t validated before execution.

In small experiments with forgiving stakes, these issues are tolerable. In production systems touching customer data, financial transactions, or regulated processes, they are not.
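Two of the concerns above, tool overuse and unproductive loops, lend themselves to simple mechanical checks. The sketch below is one possible approach under stated assumptions: `LoopGuard`, `BudgetExceeded`, and the default limits are hypothetical, and a real system would tune the thresholds to its own cost model.

```python
from collections import Counter

class BudgetExceeded(Exception):
    """Raised when the agent exhausts its tool budget or keeps repeating itself."""

class LoopGuard:
    def __init__(self, max_tool_calls: int = 10, max_repeats: int = 2):
        self.max_tool_calls = max_tool_calls
        self.max_repeats = max_repeats
        self.calls = 0
        self.seen = Counter()

    def check(self, action: str, argument: str) -> None:
        """Call before every tool invocation; raises instead of letting the loop run on."""
        self.calls += 1
        if self.calls > self.max_tool_calls:
            raise BudgetExceeded(f"tool budget of {self.max_tool_calls} calls exhausted")
        self.seen[(action, argument)] += 1
        if self.seen[(action, argument)] > self.max_repeats:
            raise BudgetExceeded(f"{action}({argument!r}) repeated too many times")

guard = LoopGuard(max_tool_calls=5, max_repeats=2)
guard.check("search", "quarterly revenue")
guard.check("search", "quarterly revenue")  # a third identical call would raise
```

A guard like this does not make the agent's reasoning better. It only caps how much an unproductive reasoning path can cost.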

Why Transparency Doesn’t Equal Governance

One of ReAct’s selling points is transparency. The agent explicitly reasons about its next step, making its decision process visible.

This transparency is genuinely useful for debugging logic errors, understanding unexpected behavior, improving prompts and system design, and building team confidence in AI capabilities.

However, visible reasoning does not equal governance. Seeing why an agent chose an action does not prevent it from choosing risky or inappropriate ones. Enterprises must separate observability from control.
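The difference between observability and control can be made concrete. In the hedged sketch below, `observe` only records what the agent decided, while `enforce` can actually refuse an action before it runs. The allowlist contents and all function names are assumptions chosen for illustration.

```python
import logging

logger = logging.getLogger("react.trace")

# Hypothetical allowlist: the only actions this agent may execute.
ALLOWED_ACTIONS = {"search_docs", "read_ticket"}

class ActionDenied(Exception):
    pass

def observe(thought: str, action: str) -> None:
    """Observability: records the reasoning, but cannot stop anything."""
    logger.info("thought=%r action=%r", thought, action)

def enforce(action: str) -> None:
    """Control: refuses any action outside the allowlist before it runs."""
    if action not in ALLOWED_ACTIONS:
        raise ActionDenied(f"action {action!r} is not permitted")

def execute(thought: str, action: str, tools: dict, argument: str):
    observe(thought, action)   # visible in the trace either way
    enforce(action)            # only this line prevents a risky call
    return tools[action](argument)
```

Removing the `enforce` call leaves the trace exactly as readable as before, yet nothing would stop a disallowed action from executing.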

McKinsey’s November 2025 State of AI report found that while 88% of organizations use AI in at least one function, only 39% report EBIT impact at the enterprise level, with governance gaps being a primary factor limiting value capture as AI systems scale.

The organizations succeeding with agentic AI understand that transparency enables trust, but governance creates it.

Why ReAct Fails at Enterprise Scale Without Structure

ReAct is designed for exploration. That flexibility is its strength. But in enterprise environments, exploration without boundaries becomes a failure mode.

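When deployed without architectural guardrails, ReAct systems often exhibit predictable breakdowns: non-deterministic action sequences, runaway or inefficient reasoning loops, excessive tool use, and actions built on unvalidated intermediate assumptions.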

Gartner’s June 2025 prediction that half of business decisions will be augmented or automated by AI agents by 2029 underscores the urgency of this issue. At that scale, adaptive reasoning must operate within governance frameworks that preserve reliability.

These failure modes erode trust, even when individual outputs seem reasonable. In regulated environments where consistency, auditability, and predictability are requirements, not preferences, unmanaged ReAct systems may be disqualified from deployment altogether.

ReAct vs Planning: Understanding the Right Tool for the Job

ReAct and planning patterns are often contrasted, but they serve fundamentally different purposes and excel in different contexts.

Planning works best when the problem space is well understood, dependencies between steps are known in advance, and steps can be sequenced reliably with confidence in outcomes.

ReAct works best when information must be discovered through interaction, the environment is uncertain or dynamic, feedback fundamentally changes the optimal path forward, and rigid plans would fail due to unforeseen conditions.

Enterprises frequently need both. The mistake organizations make is using ReAct everywhere simply because it feels more intelligent or modern, rather than matching the pattern to the problem characteristics.

Gartner’s research on AI agent development frameworks emphasizes that key capabilities include planning and reasoning (breaking down goals into steps), with successful enterprise implementations combining these capabilities appropriately rather than defaulting to one pattern for all scenarios.

| Tool Use | Planning | ReAct |
|---|---|---|
| Single decision | Plan first, execute later | Continuous loop |
| One action | Many actions | Adaptive actions |
| Assumes predictability | Assumes stability | Assumes uncertainty |
| Minimal feedback | Delayed feedback | Immediate feedback |

How ReAct Fits into Production-Ready Systems

In mature enterprise architectures, ReAct is never deployed as an unchecked capability. It’s typically combined with orchestration to define reasoning boundaries and stopping conditions, tool governance to limit what actions are allowed during exploration, evaluation frameworks to determine when sufficient progress has been made, human-in-the-loop controls for high-impact decisions that exceed agent authority, and memory systems to avoid repeating unproductive reasoning paths.
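Two of these controls, human-in-the-loop approval and memory of unproductive paths, can be illustrated with a small wrapper around tool execution. This is a sketch under assumptions: `Governance`, `governed_act`, the `issue_refund` action, and the default deny-all approver are hypothetical, and orchestration boundaries and evaluation frameworks are outside its scope.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Governance:
    # Actions that exceed agent authority (hypothetical example).
    high_impact: frozenset = frozenset({"issue_refund"})
    # Human approval hook; the default denies everything until wired to a reviewer.
    approve: Callable[[str, str], bool] = lambda action, argument: False
    # Memory of (action, argument) pairs that already failed.
    failed_paths: set = field(default_factory=set)

def governed_act(gov: Governance, tools: dict, action: str, argument: str) -> str:
    key = (action, argument)
    if key in gov.failed_paths:
        return "skipped: this path already failed"
    if action in gov.high_impact and not gov.approve(action, argument):
        return "blocked: human approval required"
    try:
        return tools[action](argument)
    except Exception as exc:
        gov.failed_paths.add(key)  # remember so the agent does not retry blindly
        return f"error: {exc}"
```

Returning the block or skip reason as an observation, rather than raising, lets the agent reason about the refusal and choose a different path within its authority.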

ReAct becomes a constrained capability inside a broader governed system, not an autonomous explorer with unlimited agency.

The organizations successfully deploying ReAct at scale are those that understand it as one tool in an architectural toolkit, not a replacement for structured thinking about when adaptability adds value and when it introduces risk.

When ReAct Is Strategic (And When It’s Dangerous)

ReAct works best when the problem space is genuinely unknown or highly variable, discovery through interaction is the only viable path forward, intermediate feedback meaningfully improves outcomes, and human-level adaptability justifies the governance overhead.

ReAct is a poor fit when determinism is required for compliance or user experience, actions have irreversible consequences, costs must be tightly controlled with predictable resource usage, or auditability demands reproducible decision paths.
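The fit criteria above can be turned into an explicit checklist that a team applies before choosing the pattern. The sketch below is illustrative only: `TaskProfile`, `react_fit`, and the field names are hypothetical, and treating any single disqualifier as decisive is an assumption, not a rule stated in this article.

```python
from dataclasses import dataclass

@dataclass
class TaskProfile:
    needs_discovery: bool            # must information be found through interaction?
    requires_determinism: bool
    irreversible_actions: bool
    strict_cost_ceiling: bool
    needs_reproducible_audit: bool

def react_fit(task: TaskProfile) -> tuple[bool, list[str]]:
    """Return (is ReAct a reasonable fit, reasons against it)."""
    checks = [
        ("determinism required", task.requires_determinism),
        ("irreversible actions", task.irreversible_actions),
        ("strict cost ceiling", task.strict_cost_ceiling),
        ("reproducible audit trail required", task.needs_reproducible_audit),
    ]
    reasons = [name for name, flagged in checks if flagged]
    if not task.needs_discovery:
        reasons.append("no discovery needed")
    return (not reasons, reasons)

incident_triage = TaskProfile(True, False, False, False, False)
payment_posting = TaskProfile(False, True, True, True, True)
```

Here `react_fit(incident_triage)` reports a fit with no objections, while `react_fit(payment_posting)` lists every reason the workflow should use a more deterministic pattern.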

Understanding when not to use ReAct is as strategically important as knowing how to implement it effectively.

What QAT Global Has Learned About ReAct

At QAT Global, we view ReAct as a powerful but volatile pattern that demands careful architectural thinking.

Used within clear boundaries, it enables AI systems to operate effectively in complex, real-world environments where rigid workflows fail. Used without governance, it creates systems that are difficult to trust, manage, or explain to stakeholders who expect accountability.

Our approach centers on structure. We define explicit reasoning boundaries that limit what the agent can consider. We establish clear success and failure conditions to terminate reasoning loops. Tool access is tightly controlled to restrict exploration to safe operations. Human oversight is built in for decisions that exceed defined thresholds. And we integrate ReAct with planning and orchestration patterns to provide stability.

Many organizations deploy ReAct agents in production because they performed well in pilots. Only later do they discover that adaptability without governance creates more operational problems than it solves. The flexibility that impresses stakeholders in a demo becomes the unpredictability that fails an audit in production.

Adaptability is valuable only when it drives measurable business outcomes within acceptable risk parameters. Without that discipline, it is simply expensive unpredictability.

What Comes Next

ReAct focuses on adaptability in the moment, enabling agents to navigate uncertainty through iterative reasoning and action. The next foundational pattern focuses on structure before execution begins.

In the next article in this series, we explore the Planning Pattern: how agents break goals into steps, manage dependencies, coordinate work across multiple actions, and why planning becomes essential as AI systems take on larger, multi-stage responsibilities where failure at any step undermines the entire workflow.

Understanding planning is critical for enterprises that need predictability alongside intelligence, especially as AI systems move from handling individual tasks to orchestrating complex business processes.

The organizations succeeding with ReAct understand it’s never deployed alone. QAT Global architects agentic systems that combine ReAct’s adaptability with planning’s structure, tool governance, and evaluation frameworks. Our AI-augmented development approach means we don’t just advise on patterns, we build production systems that prove the architecture works in your environment.
